I have found myself using UNION in MySQL more and more lately. In this example, I am using it to speed up queries that are using IN clauses. MySQL handles the IN clause like a big OR operation. Recently, I created what looks like a very crazy query using UNION, that in fact helped our MySQL servers perform much better.

With any technology you use, you have to ask yourself, "What is this tech good at doing?" For me, MySQL has always been excelent at running lots of small queries that use primary, unique, or well defined covering indexes. I guess most databases are good at that. Perhaps that is the bare minimum for any database. MySQL seems to excel at doing this however. We had a query that looked like this:

select category_id, count(*) from some_table

where

article_id in (1,2,3,4,5,6,7,8,9) and

category_id in (11,22,33,44,55,66,77,88,99) and

some_date_time > now() - interval 30 day

group by

category_id

There were more things in the where clause. I am not including them all in these examples. MySQL does not have a lot it can do with that query. Maybe there is a key on the date field it can use. And if the date field limits the possible rows, a scan of those rows will be quick. That was not the case here. We were asking for a lot of data to be scanned. Depending on how many items were in the in clauses, this query could take as much as 800 milliseconds to return. Our goal at DealNews is to have all pages generate in under 300 milliseconds. So, this one query was 2.5x our total page time.

In case you were wondering what this query is used for, it is used to calculate the counts of items in sub categories on our category navigation pages. On this page it's the box on the left hand side labeled "Category". Those numbers next to each category are what we are asking this query to return to us.

Because I know how my data is stored and structured, I can fix this slow query. I happen to know that there are many fewer rows for each item for article_id than there is for category_id. There is also a key on this table on article_id and some_date_time. That means, for a single article_id, MySQL could find the rows it wants very quickly. Without using a union, the only solution would be to query all this data in a loop in code and get all the results back and reassemble them in code. That is a lot of wasted round trip work for the application however. You see this pattern a fair amount in PHP code. It is one of my pet peeves. I have written before about keeping the data on the server. The same idea applies here. I turned the above query into this:

select category_id, sum(count) as count from

(

(

select category_id, count(*) as count from some_table

where

article_id=1 and

category_id in (11,22,33,44,55,66,77,88,99) and

some_date_time > now() - interval 30 day

group by

category_id

)

union all

(

select category_id, count(*) as count from some_table

where

article_id=2 and

category_id in (11,22,33,44,55,66,77,88,99) and

some_date_time > now() - interval 30 day

group by

category_id

)

union all

(

select category_id, count(*) as count from some_table

where

article_id=3 and

category_id in (11,22,33,44,55,66,77,88,99) and

some_date_time > now() - interval 30 day

group by

category_id

)

union all

(

select category_id, count(*) as count from some_table

where

article_id=4 and

category_id in (11,22,33,44,55,66,77,88,99) and

some_date_time > now() - interval 30 day

group by

category_id

)

union all

(

select category_id, count(*) as count from some_table

where

article_id=5 and

category_id in (11,22,33,44,55,66,77,88,99) and

some_date_time > now() - interval 30 day

group by

category_id

)

union all

(

select category_id, count(*) as count from some_table

where

article_id=6 and

category_id in (11,22,33,44,55,66,77,88,99) and

some_date_time > now() - interval 30 day

group by

category_id

)

union all

(

select category_id, count(*) as count from some_table

where

article_id=7 and

category_id in (11,22,33,44,55,66,77,88,99) and

some_date_time > now() - interval 30 day

group by

category_id

)

union all

(

select category_id, count(*) as count from some_table

where

article_id=8 and

category_id in (11,22,33,44,55,66,77,88,99) and

some_date_time > now() - interval 30 day

group by

category_id

)

union all

(

select category_id, count(*) as count from some_table

where

article_id=9 and

category_id in (11,22,33,44,55,66,77,88,99) and

some_date_time > now() - interval 30 day

group by

category_id

)

) derived_table

group by

category_id

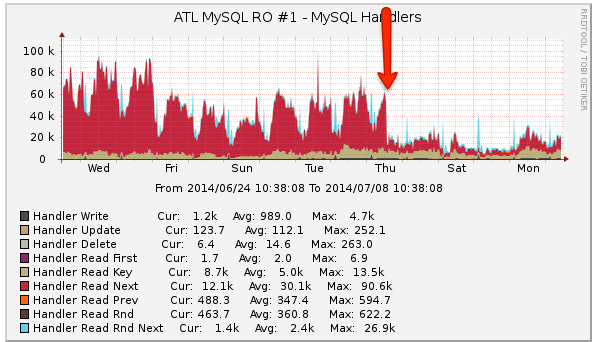

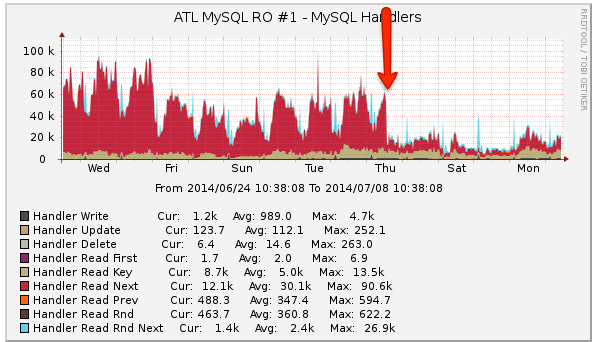

Pretty gnarly looking huh? The run time of that query is 8ms. Yes, MySQL has to perform 9 subqueries and then the outer query. And because it can use good keys for the subqueries, the total execution time for this query is only 8ms. The data comes back from the database ready to use in one trip to the server. The page generation time for those pages went from a mean of 213ms with a standard deviation of 136ms to a mean of 196ms and standard deviation of 81ms. That may not sound like a lot. Take a look at how much less work the MySQL servers are doing now.

The arrow in the image is when I rolled the change out. Several other graphs show the change in server performance as well.

The UNION is a great way to keep your data on the server until it's ready to come back to your application. Do you think it can be of use to you in your application?